Hadoop Software

Conquering the Data Avalanche: Why Apache Hadoop Software is Still King

The world generates data faster than ever before. If your business deals with terabytes, or even petabytes, of information, you've likely encountered a major roadblock: a traditional single-server database struggles with that volume and velocity. This challenge is precisely why Hadoop, an open-source project of the Apache Software Foundation, was created.

Hadoop isn't just a single piece of technology; it's a revolutionary framework designed to store and process enormous datasets across clusters of commodity hardware. Think of it as a massive, scalable data processing engine that treats failure as the norm, not the exception. In this deep dive, we'll peel back the layers of Hadoop, explore its core components, and evaluate its critical relevance in today's cloud-centric data landscape.

The Hadoop Blueprint: What Exactly is This Distributed Framework?

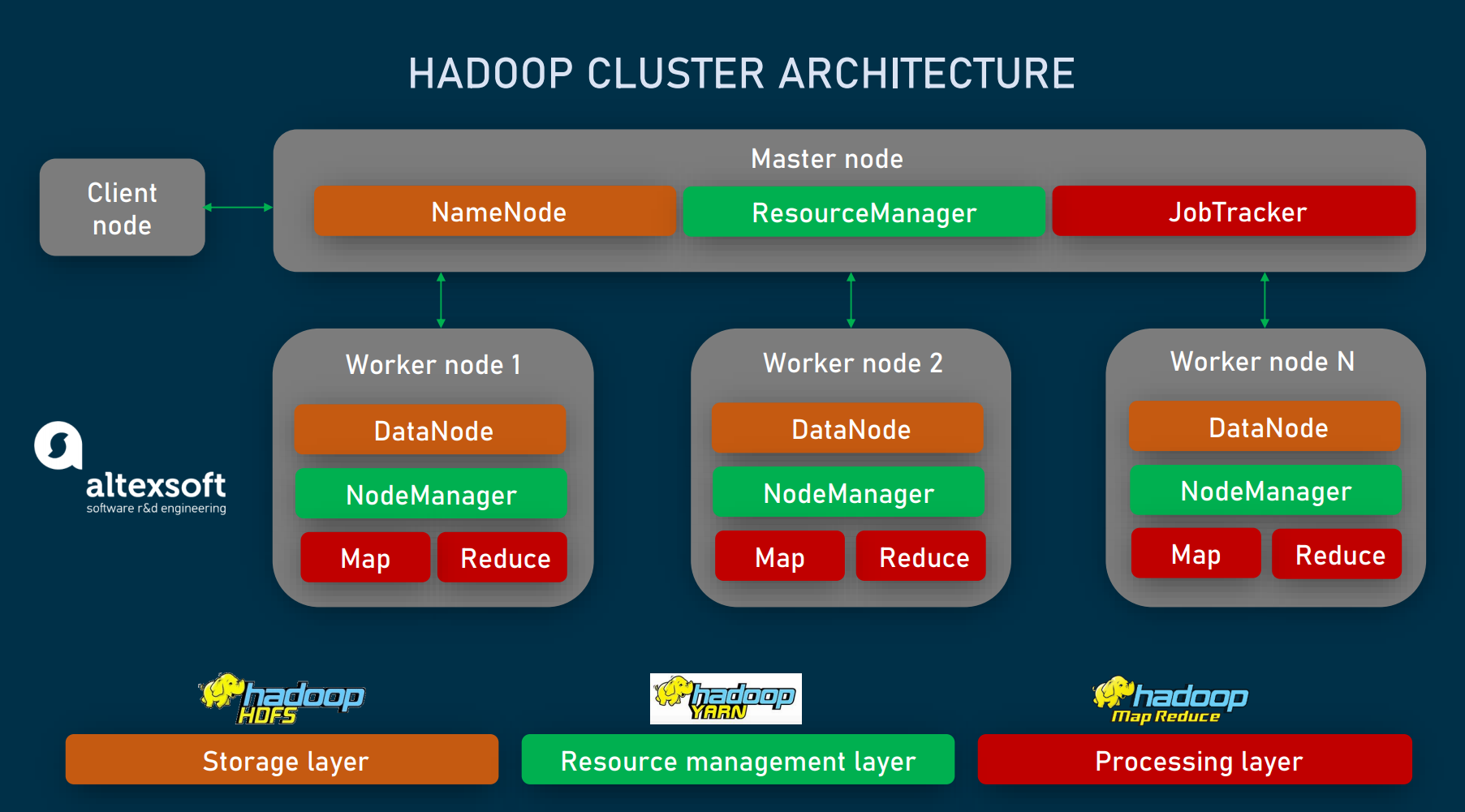

At its heart, Hadoop is an open-source framework, written in Java, that allows for the distributed processing of large datasets across computer clusters. Instead of relying on one massive, expensive machine, Hadoop splits the data and computational load across hundreds or thousands of cheaper, readily available servers.

This approach offers two enormous advantages: scalability (you just add more machines) and resilience (if one machine fails, the data is safe and the process continues).

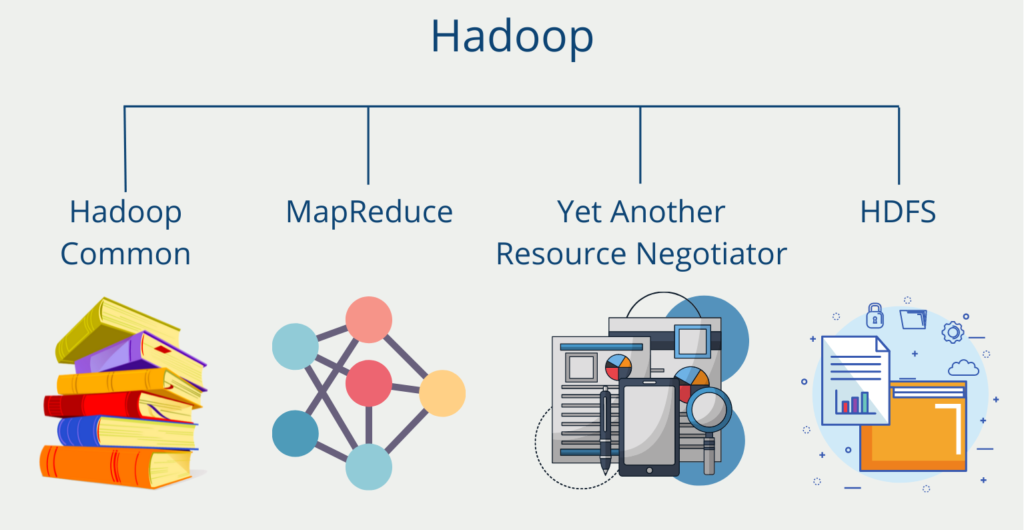

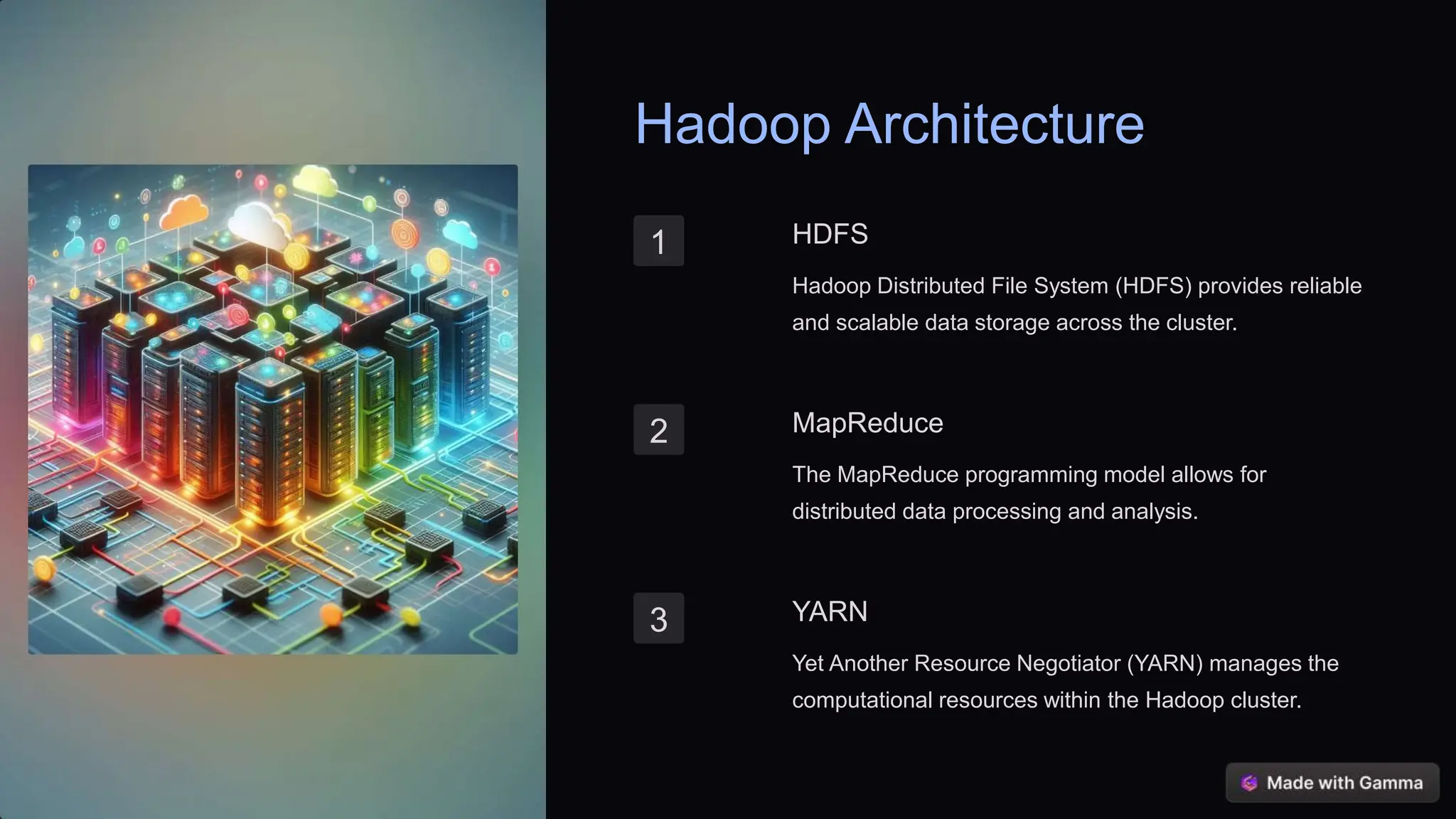

The Four Pillars of Core Hadoop

To truly understand how Hadoop Software functions, you must grasp its four foundational modules:

- Hadoop Distributed File System (HDFS): The primary storage layer. HDFS is optimized for high throughput of data access and operates on the principle of storing data blocks across multiple nodes for fault tolerance.

- MapReduce: The original processing engine. It provides a programming model for performing parallel computations over vast data sets in a distributed manner.

- YARN (Yet Another Resource Negotiator): Introduced in Hadoop 2, YARN is the cluster resource management system. It schedules jobs and allocates resources (CPU, memory) dynamically across the cluster, vastly improving efficiency and flexibility.

- Hadoop Common: These are the essential Java libraries and utilities that support the other modules.
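To make YARN's role a little more concrete, here is a deliberately simplified sketch of container allocation. It is not YARN's API or its actual scheduling algorithm (real clusters use pluggable schedulers with queues and priorities); the `Node` class, the `allocate` function, and the first-fit rule are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Hypothetical worker node with its remaining capacity."""
    name: str
    free_mem_mb: int
    free_vcores: int


def allocate(nodes, mem_mb, vcores):
    """Reserve a container on the first node with enough free capacity."""
    for node in nodes:
        if node.free_mem_mb >= mem_mb and node.free_vcores >= vcores:
            node.free_mem_mb -= mem_mb
            node.free_vcores -= vcores
            return node.name
    return None  # no node fits; the request would have to wait


cluster = [Node("nm1", 4096, 2), Node("nm2", 8192, 4)]
requests = [(2048, 1), (4096, 2), (2048, 1), (8192, 4)]
assignments = [allocate(cluster, mem, cores) for mem, cores in requests]
print(assignments)  # ['nm1', 'nm2', 'nm1', None]
```

The last request gets `None` because no single node has 8 GB and 4 cores left, which is the kind of situation a real scheduler resolves by queueing the request until running containers finish.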

For a detailed history of its development and design principles, you can refer to authoritative sources like Wikipedia's entry on Apache Hadoop.

Decrypting the Core: HDFS and MapReduce Explained

These two components are where the magic truly happens. They dictate how data is stored and how tasks are executed in parallel.

HDFS: Reliability Through Replication

Imagine breaking a huge novel into small chapters and keeping three identical copies of each chapter in three different libraries. That's HDFS at work. When data is ingested, HDFS splits it into blocks (128 MB by default in current releases, often raised to 256 MB) and, by default, stores three replicas of each block on separate DataNodes.

This default replication factor of 3 (set through the `dfs.replication` property) keeps data safe. If a machine crashes, the NameNode notices that some blocks have lost a replica and schedules new copies from the surviving replicas onto other DataNodes. Because fault tolerance is handled in software, the cluster can run on inexpensive hardware, which is what makes Hadoop so robust and cost-effective.
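The block splitting and re-replication described above can be sketched in a few lines. This is a toy model under stated assumptions, not HDFS code: the function names are hypothetical, placement is plain round-robin (real HDFS placement is rack-aware), and no data is actually copied.

```python
BLOCK_SIZE = 128 * 1024 * 1024  # 128 MB block size
REPLICATION = 3                 # replication factor of 3


def place_blocks(file_size, nodes, block_size=BLOCK_SIZE, replication=REPLICATION):
    """Return {block_id: [node, ...]} using simple round-robin placement."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    num_blocks = (file_size + block_size - 1) // block_size  # ceiling division
    placement = {}
    for block_id in range(num_blocks):
        # Pick `replication` distinct nodes, rotating the start per block.
        placement[block_id] = [
            nodes[(block_id + offset) % len(nodes)] for offset in range(replication)
        ]
    return placement


def handle_node_failure(placement, failed, nodes, replication=REPLICATION):
    """Drop the failed node and re-replicate affected blocks onto live nodes."""
    live = [n for n in nodes if n != failed]
    for holders in placement.values():
        holders[:] = [n for n in holders if n != failed]
        for candidate in live:
            if len(holders) >= replication:
                break
            if candidate not in holders:
                holders.append(candidate)
    return placement


nodes = ["dn1", "dn2", "dn3", "dn4"]
placement = place_blocks(1024 * 1024 * 1024, nodes)  # a 1 GB file
print(len(placement))   # 8
print(placement[0])     # ['dn1', 'dn2', 'dn3']
handle_node_failure(placement, "dn2", nodes)
print(placement[0])     # ['dn1', 'dn3', 'dn4']
```

A 1 GB file becomes 8 blocks and 24 stored replicas, so raw storage use is three times the file size. That overhead is the price paid for surviving the loss of any single node.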

MapReduce: The Parallel Processing Engine

MapReduce defines how complex processing tasks are broken down. It involves two main phases:

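- Map Phase: Each mapper reads its share of the input, ideally from blocks stored on the same node, and transforms every record into intermediate key-value pairs, filtering as needed. The framework then shuffles and sorts these pairs so that all values for a given key reach the same reducer.

- Reduce Phase: Each reducer receives the grouped values for its keys and aggregates, summarizes, or combines them to produce the final output.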
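The classic illustration of this model is a word count. The sketch below imitates the phases in plain, single-process Python, including the grouping step the framework performs between map and reduce. It does not use the Hadoop API, and the function names are chosen for this example only.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Group values by key and sort the keys, as happens between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return dict(sorted(grouped.items()))


def reduce_phase(key, values):
    """Combine all values for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    intermediate = [pair for line in lines for pair in map_phase(line)]
    return dict(reduce_phase(k, v) for k, v in shuffle(intermediate).items())


result = word_count(["big data big clusters", "data moves to code"])
print(result)
# {'big': 2, 'clusters': 1, 'code': 1, 'data': 2, 'moves': 1, 'to': 1}
```

In a real cluster, each call to `map_phase` could run on a different node against a local block, and each key group could be handed to a different reducer. The program's logic stays the same, which is the point of the model.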

The original MapReduce framework is foundational, and it remains a crucial concept. That said, modern big data pipelines often use faster, in-memory engines that run on YARN, such as Apache Spark, for iterative and near-real-time processing. If you want to dive deeper into the technical details of the model, consult the original research paper: MapReduce: Simplified Data Processing on Large Clusters.

Hadoop's Relevance in the Cloud Era: Is It Dying?

This is the million-dollar question. With the rise of proprietary cloud data warehouses (like Snowflake or Google BigQuery), many people wonder if on-premise Hadoop Software implementations are obsolete. The short answer is: No, not at all.

While cloud services offer unparalleled ease of use, Hadoop remains critical for specific use cases, particularly where cost efficiency on massive scale (multi-petabytes) and regulatory control (data residency) are paramount.

Moreover, modern cloud architectures often run Hadoop components (like HDFS and YARN) on cloud infrastructure (e.g., Amazon EMR on AWS or HDInsight on Azure), which shows that the two work together rather than one simply replacing the other.

Hadoop vs. Cloud Data Warehouses: A Snapshot

Understanding the difference often comes down to cost and flexibility:

| Feature | Hadoop Ecosystem | Cloud Data Warehouses (e.g., Snowflake) |
|---|---|---|
| Data Structure | Schema-on-Read (Highly flexible for unstructured data) | Schema-on-Write (Typically structured/semi-structured) |
| Primary Cost Driver | Hardware acquisition and maintenance (CapEx) | Compute usage (Pay-as-you-go OpEx) |
| Processing Speed (Typical) | High latency batch processing (optimized for throughput) | Low latency analytical querying |
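The schema-on-read row is easier to picture with a small example. In the sketch below, raw records are stored exactly as they arrive, and a schema is applied only when the data is read. The `raw_store` list, the `read_with_schema` function, and the schema format are hypothetical and stand in for files in HDFS and a query engine such as Hive.

```python
import json

# Raw events are kept exactly as they arrive; nothing is validated on write.
raw_store = [
    '{"user": "a1", "action": "click", "ms": 120}',
    '{"user": "b2", "action": "view"}',
    'not even json',
]


def read_with_schema(lines, schema):
    """Apply `schema` ({field: (type, default)}) while reading, skipping bad rows."""
    for line in lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # malformed rows are dropped at read time, not at write time
        if not isinstance(record, dict):
            continue
        yield {
            field: cast(record.get(field, default))
            for field, (cast, default) in schema.items()
        }


schema = {"user": (str, ""), "ms": (int, 0)}
rows = list(read_with_schema(raw_store, schema))
print(rows)  # [{'user': 'a1', 'ms': 120}, {'user': 'b2', 'ms': 0}]
```

A different reader could apply a different schema to the same raw data, for instance one that keeps only `action`. A schema-on-write system would instead have rejected or reshaped the second and third records when they were loaded.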

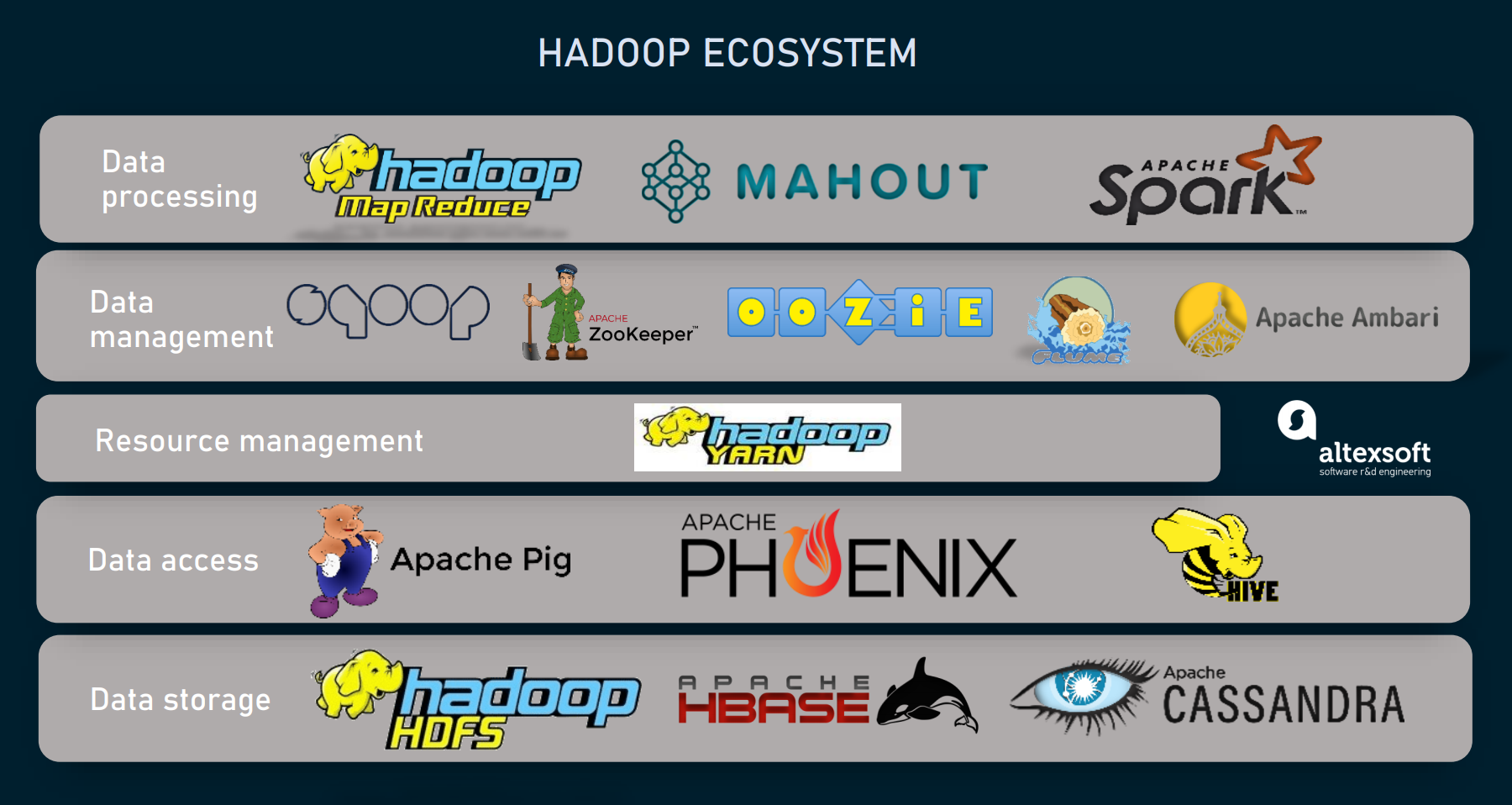

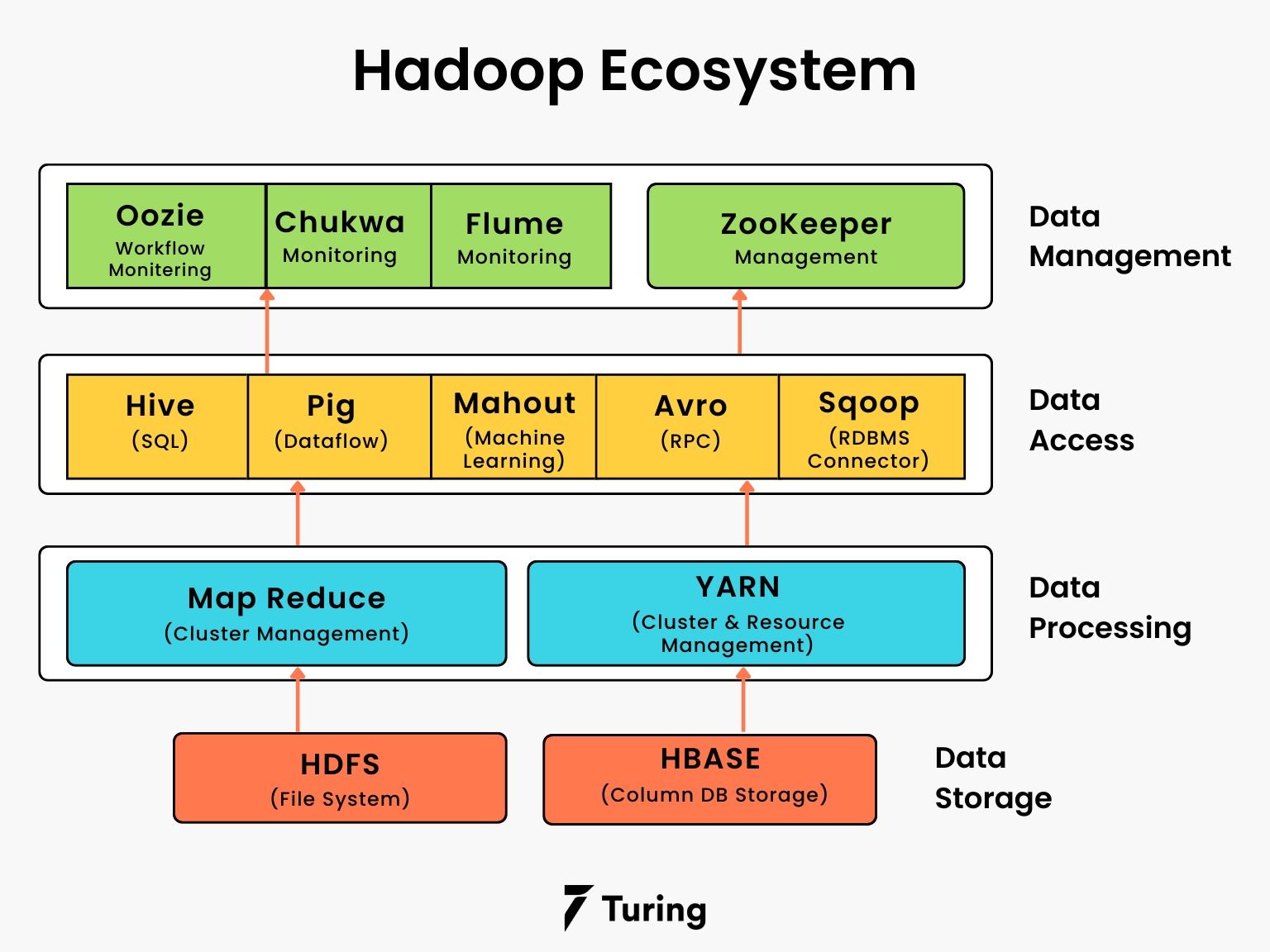

The Ecosystem Effect: Beyond HDFS

Hadoop's true power lies in its extensive ecosystem. Tools like Hive (for SQL querying), Pig (for procedural data-flow scripting), and most importantly, Apache Spark, run on YARN and work against the massive storage provided by HDFS, creating a comprehensive platform for data ingestion, cleaning, analysis, and machine learning.

Real-World Impact: Who Uses Hadoop Software?

Many of the world's largest data generators rely heavily on Hadoop Software to handle their operations. The key industries benefiting from Hadoop's scalability are:

1. Social Media Giants: Companies like Facebook (which contributed significantly to Hadoop's development) use it to store and analyze user activity, clickstream data, and billions of daily interactions for targeted advertising and content recommendation.

2. Financial Services: Banks use Hadoop clusters for high-volume fraud detection, risk modeling, and regulatory compliance reporting that requires processing years of transactional data quickly.

3. Telecommunications: Telcos analyze massive call detail records (CDRs) and network performance data to optimize service quality and predict network bottlenecks.

The commitment to the framework from major players like Yahoo (where much of its early development took place) and current contributors illustrates its stability and reliability in industrial settings. You can track the current state of the Apache project here: The Apache Hadoop Project Official Site.

If your goal is to transition into a data science career, mastering the fundamentals of distributed processing via Hadoop and its ecosystem partners (like Spark) remains a highly valued skill.

Conclusion: The Enduring Legacy of Hadoop

Apache Hadoop Software revolutionized the way enterprises approach big data. By decoupling storage (HDFS) from processing (MapReduce/YARN) and embracing distributed, commodity computing, it made handling petabytes of unstructured data feasible and affordable.

The tools built around Hadoop (like Spark and various cloud services) have evolved and often replaced the original MapReduce engine. Even so, Hadoop's fundamental principles, namely resource management via YARN and robust, cost-effective distributed storage via HDFS, ensure its continued relevance as a foundational technology for complex data infrastructure globally.

Frequently Asked Questions (FAQ) About Hadoop Software

- Is Hadoop still necessary if I use a modern Cloud Data Lake?

Often, yes. Cloud object stores (like Amazon S3 or Azure Data Lake Storage) provide the storage layer of a data lake, but services like Amazon EMR still use YARN and other components from the Hadoop ecosystem for resource management and for processing large batches of data.

- What is the biggest difference between Hadoop and traditional RDBMS?

Traditional Relational Database Management Systems (RDBMS) scale vertically (adding more power to a single server), handle structured data well, and prioritize low latency transactional queries. Hadoop scales horizontally (adding more servers), excels at high throughput batch processing of unstructured data, and prioritizes fault tolerance.

- What is the best way to start learning Hadoop Software today?

Focus on understanding the core architecture (HDFS and YARN). Then, immediately move on to learning Apache Spark, as it is the current industry standard engine used for processing data stored within Hadoop clusters. Learning Hive for SQL access is also highly recommended.
